Table of Contents

- From Soulless to Standout: Benchmarks & Workflows for AI‑Assisted Content

- 1. Quantitative Quality Benchmarks for AI‑Assisted Content

- 2. Recommended AI Tools for Content Creation and SEO

- 3. A Simple End‑to‑End Validation Workflow You Can Steal

- Conclusion: Turn AI from Risk into a Ranking Advantage

From Soulless to Standout: Benchmarks & Workflows for AI‑Assisted Content

AI can help you publish faster, but it can also flood your site with bland, risky content. The difference between soulless and standout often comes down to one thing: how you measure and edit what the AI gives you. That is where clear benchmarks, smart tools, and tight workflows matter.

At Lyfe Forge, we focus on AI‑assisted content that still feels human, ranks in search, and supports real business goals. In this guide, you will learn three things: the key quality metrics for AI content, which tools actually help with SEO and validation, and a simple end‑to‑end workflow you can use today.

1. Quantitative Quality Benchmarks for AI‑Assisted Content

Good AI‑assisted content starts with clear metrics. You cannot improve what you do not measure, and “this feels okay” is not a reliable QA process. Modern evaluation frameworks treat quality as multi‑dimensional, not just “is this fluent English?” or “did we hit 1,500 words?”.

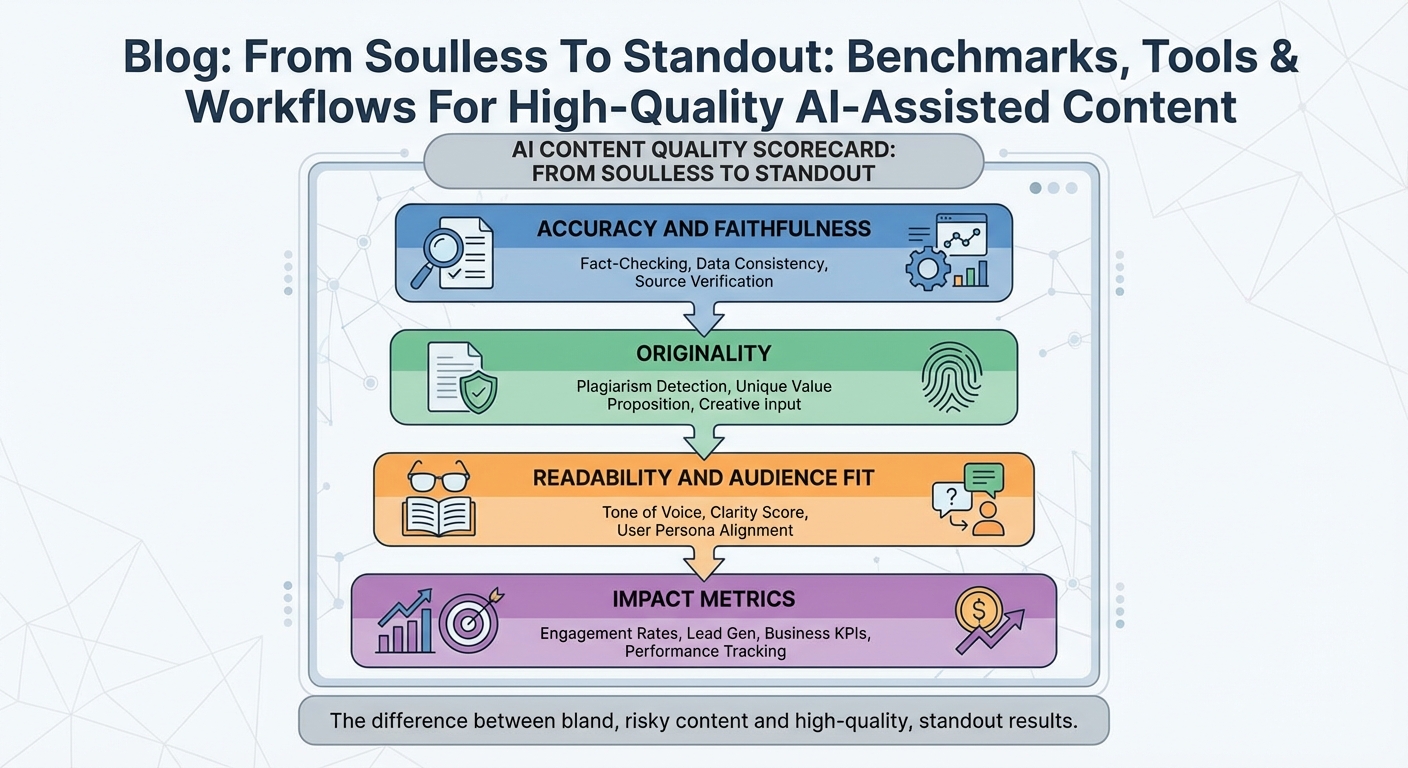

A practical scorecard for AI content usually covers four layers:

- Accuracy and faithfulness – Does each factual claim match trusted sources? Automatic scores like BLEU and ROUGE, which track text overlap with references, are useful but insufficient on their own, because surface similarity does not guarantee correctness or honesty in AI output. As highlighted in Faithfulness in Natural Language Generation: A Survey, these metrics alone cannot reliably detect hallucinations, and the authors instead emphasize dedicated human evaluation when assessing factual faithfulness. Pair the scores with targeted fact checks.

- Originality – Is the draft genuinely unique, or a paraphrase of the top search results? Teams pair plagiarism scanners with semantic similarity tools to avoid both direct copying and “template” content that adds no new value.

- Readability and audience fit – Instead of trusting a single score like Flesch, leading frameworks cross‑check readability metrics with human judgments. Recent guidance and research show that traditional readability formulas are only rough estimates of text complexity: they miss factors like logical flow, coherence, and findability, and often correlate poorly with real reader experience. You need both the numbers and a human pass to confirm that tone, structure, and complexity genuinely match your target audience’s needs, especially when you combine classic scores with modern NLP models that better approximate real reader experience.

- Impact metrics – Beyond the text itself, high‑maturity teams measure search performance (rankings, organic clicks, snippets), engagement (scroll depth, time on page, bounce), conversions, and even brand impact (mentions, sentiment, and trust).

This shift toward holistic evaluation mirrors broader AI research. A number of studies argue that accuracy‑only metrics are not enough; you should also track how AI content affects decisions, trust, and business outcomes over time. That is why Lyfe Forge bakes both text‑level and outcome‑level signals into our scoring, instead of chasing a single “perfect” metric, and why our AI SEO content safety framework leans heavily on measurable benchmarks.
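To make the four layers concrete, here is a minimal sketch of a scorecard for a published piece. The weights, field names, and the 0.0 to 1.0 scale are assumptions chosen for illustration, not Lyfe Forge's actual scoring model; how you derive each layer's score is up to your own tools and reviewers.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only for illustration; they sum to 1.0.
WEIGHTS = {"accuracy": 0.4, "originality": 0.2, "readability": 0.2, "impact": 0.2}


@dataclass
class Scorecard:
    """Per-layer scores, each normalised to the range 0.0 to 1.0."""
    accuracy: float
    originality: float
    readability: float
    impact: float

    def overall(self) -> float:
        """Weighted average across the four layers."""
        return sum(getattr(self, name) * w for name, w in WEIGHTS.items())

    def weakest_layer(self) -> str:
        """Name of the lowest-scoring layer, to direct the next edit pass."""
        return min(WEIGHTS, key=lambda name: getattr(self, name))


# Made-up scores for a single article.
draft = Scorecard(accuracy=0.9, originality=0.6, readability=0.8, impact=0.5)
print(round(draft.overall(), 2), draft.weakest_layer())
```

Keeping the layers separate, rather than storing only the overall number, lets you see which dimension is dragging a piece down.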

2. Recommended AI Tools for Content Creation and SEO

Once you know what to measure, the next question is simple: which tools actually help you hit those benchmarks? The Australian market has quickly moved beyond simple “AI writers” toward integrated stacks that blend generation, SEO analysis, and quality control.

On the evaluation side, a different family of tools focuses on metrics like BLEU, ROUGE, and perplexity to benchmark language models. A technical guide on LLM evaluation metrics made easy explains how these scores help compare models on tasks such as translation or summarisation, while also warning that they cannot capture everything that matters to human readers. In practice, Australian agencies using these metrics for QA pair them with human editorial review and task‑specific checks for factual accuracy.
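A small sketch shows why overlap scores need a fact check beside them. The function below is a simplified ROUGE‑1 style F1 (whitespace tokens, no stemming), not the reference implementation, and the two example sentences are made up for illustration.

```python
from collections import Counter


def rouge1_f1(candidate: str, reference: str) -> float:
    """Unigram-overlap F1 between a candidate and a reference text."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # clipped count of shared words
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


# Invented example: the candidate gets the year wrong.
reference = "the policy took effect in 2021"
wrong_fact = "the policy took effect in 2012"
print(rouge1_f1(wrong_fact, reference))
```

Five of the six words match, so the score is high even though the one word that differs is the fact that matters.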

There is also a growing niche of AI‑native quality platforms. Some, like RobotSpeed and RankAI, use AI‑driven analysis to assess readability, originality signals, and search‑intent alignment at scale. Others plug directly into CMS workflows to score each piece before it goes live. In Australia, this trend mirrors official Australian Government guidance on using public generative AI tools, which explicitly stresses human judgment, oversight, and accountability; teams are directed to check outputs for fairness, accuracy, and potential bias rather than trusting AI blindly.

Lyfe Forge sits on top of this tool ecosystem, acting as the “orchestra conductor.” We integrate generation, SEO insights, and validation rules into one opinionated workflow, so marketers do not have to stitch together half a dozen dashboards just to decide whether a single article is safe to publish.

3. A Simple End‑to‑End Validation Workflow You Can Steal

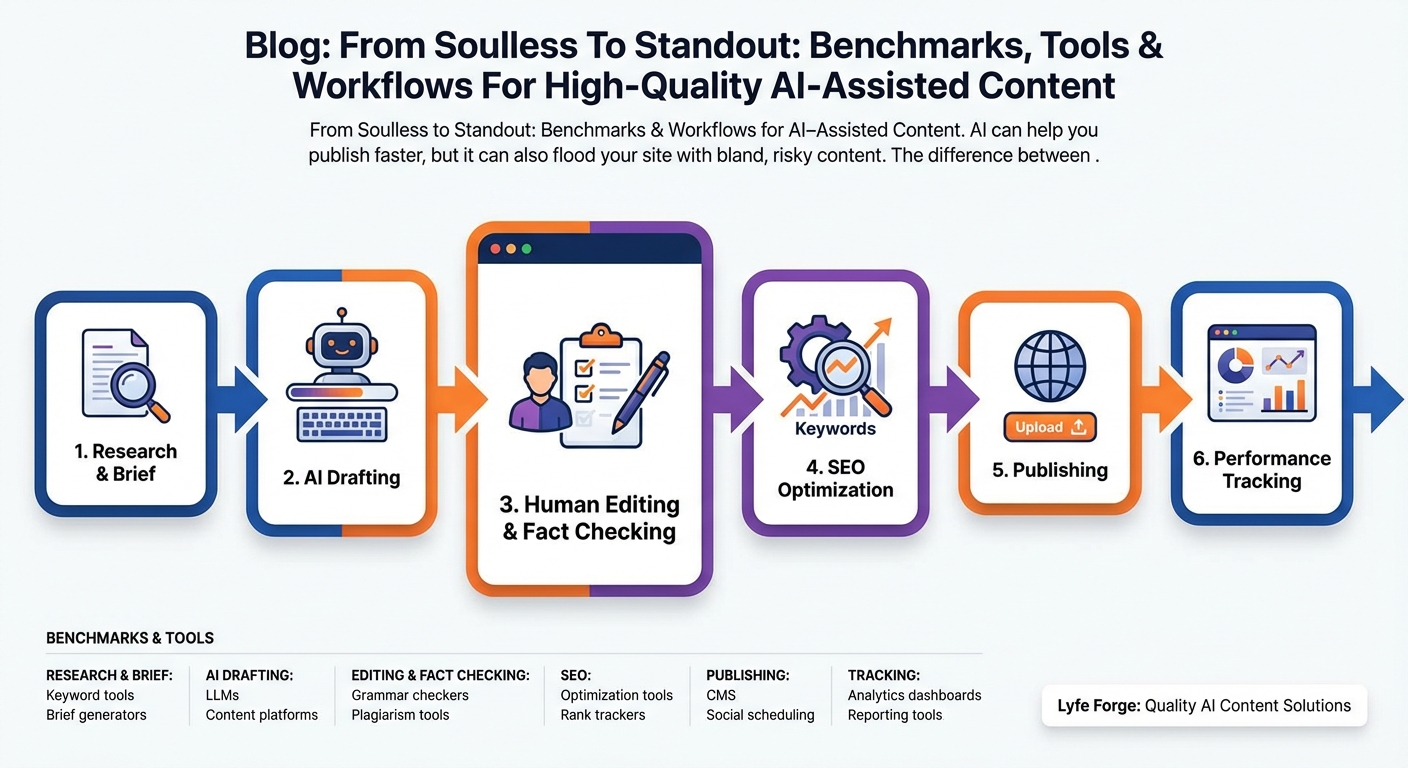

Tools and metrics are useful, but they only pay off when you put them into a repeatable process. Recent frameworks from content and SEO platforms outline a seven‑step loop that works well for AI‑assisted content; Lyfe Forge adopts the same pattern, with a few extra guardrails for Australian businesses.

- Strategy and briefing – A human defines the search intent, target audience, and business goal for each piece. You also capture Australian nuances here: local spelling, examples, and any compliance rules (such as privacy or advertising standards) that must be respected, using references like your own privacy policy and cookie policy as guardrails.

- AI draft generation – An AI engine creates a structured draft based on the brief, shaped for SEO from the start. Instead of pushing raw model output into your CMS, Lyfe Forge keeps drafts in a sandbox where every paragraph is still open to change.

- Automated pre‑checks – Before a human editor touches the draft, it passes through AI‑native scoring for readability, originality, and intent alignment. Pieces that fail thresholds are automatically flagged for regeneration or deeper edits. Modern systems also plug in hallucination‑detection tools and faithfulness metrics to catch unsupported claims early, reducing the manual load on editors.

- Human editorial review – A trained editor reviews the piece against a short checklist: fact accuracy, clarity, structure, tone, and local relevance. They rewrite weak sections, add real examples, and ensure the article says something new rather than remixing existing SERPs. Research on human – AI collaboration keeps showing the same pattern: the best results come from humans steering the work, not fixing it at the last minute.

- On‑page SEO optimisation – Now you refine headings, internal links, schema, and semantic coverage using tools like Surfer SEO or Semrush. The aim is not to chase a perfect content score, but to make sure the piece answers the query better than anything else on page one while still reading naturally, something your content library and key landing pages can be mapped against over time.

- Publish and monitor – Once live, you monitor rankings, organic traffic, time on page, and conversion actions. For AI‑assisted pages, you also track which workflow created them (AI‑first vs human‑first) so you can compare performance honestly over time rather than relying on one‑off anecdotes.

- Continuous improvement – Finally, you run A/B tests and scheduled content reviews. Under‑performing pieces may get fresh research, new sections, or even a full rewrite. This feedback loop lets your quality benchmarks evolve alongside Google’s algorithms and your own audience data.

Guides on AI content quality control stress that this sort of loop is the only reliable way to scale AI usage without burning trust or search visibility. One 2026 overview of AI content quality‑control workflows highlights the need to combine automated checks with human judgement at multiple stages, rather than treating human review as a single, rushed final step.
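The automated pre‑check step in the loop above can be sketched as a simple threshold gate. The check names, the minimum scores, and the routing labels are all hypothetical, and the scores themselves are assumed to come from whatever scoring tools you already use.

```python
# Hypothetical minimum scores (0.0 to 1.0); tune these to your own data.
THRESHOLDS = {"readability": 0.6, "originality": 0.7, "intent_alignment": 0.7}


def precheck(scores: dict[str, float]) -> list[str]:
    """Return the names of checks that fall below their threshold."""
    return [
        name
        for name, minimum in THRESHOLDS.items()
        if scores.get(name, 0.0) < minimum  # a missing score counts as a fail
    ]


def route(scores: dict[str, float]) -> str:
    """Send passing drafts to the editor; flag the rest for rework."""
    failed = precheck(scores)
    if not failed:
        return "human_review"
    return "flag_for_rework: " + ", ".join(failed)


print(route({"readability": 0.8, "originality": 0.65, "intent_alignment": 0.9}))
```

Note that a passing draft still goes to a human editor; the gate only decides what reaches them, it does not replace them.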

Lyfe Forge encodes this whole workflow into our platform. Briefs, drafts, checks, and performance data live in one place, so your team can move quickly without losing track of what is actually working.

Conclusion: Turn AI from Risk into a Ranking Advantage

AI‑assisted content does not have to feel generic or risky. With clear quantitative benchmarks, the right mix of SEO and validation tools, and a disciplined end‑to‑end workflow, you can publish at scale while still earning clicks, leads, and trust.

Lyfe Forge exists to make that path easier for Australian teams. We bring strategy, generation, QA, and performance tracking together so you can move past one‑off “AI experiments” and into a stable, measurable content engine. If you are ready to turn AI from a gamble into a growth channel, now is the time to build your benchmark‑driven workflow – and we would love to help you design it with our AI content platform, clear terms of service that support commercial use, and a start‑free pricing option designed with Australian businesses in mind.

Frequently Asked Questions

What are quantitative quality benchmarks for AI-assisted content?

Quantitative quality benchmarks are measurable criteria you use to judge how good your AI-assisted content is. Common benchmarks include accuracy and faithfulness to sources, originality, readability and audience fit, and SEO performance. Using a scorecard across these dimensions helps you systematically improve drafts instead of relying on gut feel.

How do I make sure AI-generated content is accurate and not hallucinated?

Start by fact-checking all key claims against trusted, up-to-date sources instead of trusting the AI by default. Use tools that highlight factual entities and external links, then pair them with manual review for high-impact sections. Research shows automated overlap metrics like BLEU and ROUGE aren’t enough on their own, so human validation is essential for critical or technical topics.

How can I check if my AI content is original and not just rephrased from Google results?

Run your draft through both a plagiarism checker and a semantic similarity tool that compares your text to top-ranking pages. Plagiarism tools catch direct copying, while semantic tools flag overly similar structures or ideas. If the piece looks like a template of existing results, add new angles such as original data, examples, or brand-specific insights.
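As a rough first pass, you can measure how much phrasing a draft shares with a competing page. The sketch below uses word‑shingle Jaccard overlap, which is a lexical stand‑in and not a true semantic similarity tool (those typically rely on embeddings); the function names and the three‑word shingle size are assumptions for illustration.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Set of overlapping word n-grams (shingles) in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_overlap(draft: str, competitor: str, size: int = 3) -> float:
    """Share of shingles the two texts have in common (0.0 to 1.0)."""
    a, b = shingles(draft, size), shingles(competitor, size)
    if not a or not b:
        return 0.0  # text shorter than one shingle: nothing to compare
    return len(a & b) / len(a | b)
```

A high overlap against several top‑ranking pages is a hint that the draft is remixing existing results; a low overlap does not prove originality, because paraphrased ideas share few exact shingles.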

What metrics should I use to measure readability for AI-written content?

You can use classic readability formulas like Flesch or Flesch-Kincaid as a starting point, but don’t rely on them alone. Combine these scores with tools that assess coherence and structure, then have a human editor check tone, clarity, and flow for your specific audience. The best workflows cross-check numbers with real reader feedback or internal subject-matter reviewers.
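For reference, the Flesch Reading Ease score is 206.835 − 1.015 × (words per sentence) − 84.6 × (syllables per word). Here is a minimal sketch; the syllable counter is a crude vowel‑group heuristic assumed for illustration, so treat the output as approximate.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count groups of consecutive vowels, minimum one."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores indicate easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
```

The formula only sees sentence length and word length, which is why a score like this cannot tell you whether the piece flows logically or suits your audience.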

What is a good workflow for creating high-quality AI-assisted content from start to finish?

A practical workflow is: research and outline first, then use AI to draft based on that outline and clear prompts. Next, run the draft through your benchmark checks (accuracy, originality, readability, SEO) and revise manually where it fails. Finally, have a human editor polish for voice, brand alignment, and business goals before publishing and monitoring performance.

Which AI tools are best for SEO-focused content creation and validation?

Use one set of tools for ideation and drafting (LLMs, brief generators) and another for validation (plagiarism checkers, fact-checking aids, on-page SEO analyzers). Look for SEO tools that evaluate search intent, headings, internal links, and entity coverage rather than just keyword density. Lyfe Forge typically combines AI writing tools with specialized SEO suites and custom scorecards to cover both creation and quality assurance.

How is AI-assisted content different from fully AI-generated content?

AI-assisted content uses AI to support parts of the process—like drafting, outlining, or rephrasing—while humans control research, strategy, editing, and final approval. Fully AI-generated content usually skips deep human intervention, which increases the risk of inaccuracy, generic writing, and weak alignment with brand and business goals. Lyfe Forge’s approach keeps humans in the loop at all critical decision and quality checkpoints.

Can AI-written content still rank in Google if it is clearly labeled as AI-assisted?

Google’s guidelines focus on content quality, usefulness, and intent, not on whether AI was involved. AI-assisted content can rank very well if it is accurate, original, and satisfies search intent better than competing pages. The key is strong human editing, clear benchmarks, and SEO-aware structure, not hiding the role of AI.

How can I tell if my AI content is good enough to publish on my business site?

Check it against a simple scorecard: are all key facts verified, is the piece clearly differentiated from top results, does it match your brand voice, and does it directly support a specific business goal or user task? If it fails any category—e.g., feels generic, misses intent, or has weak calls to action—send it back through revision. Many teams work with partners like Lyfe Forge to design and enforce these publishing thresholds.

How does Lyfe Forge help businesses implement AI content workflows safely?

Lyfe Forge designs custom workflows that combine AI tools with human review at each high-risk step, from research and outlining to final QA. They set quantitative benchmarks for quality, integrate the right SEO and validation tools, and train your team on prompts and processes that keep content human-centric and compliant. This lets you scale publishing speed without sacrificing accuracy, brand voice, or search performance.