Table of Contents

- AI, E‑E‑A‑T, and Multi‑Source Research: A Strategic SEO Playbook

- E‑E‑A‑T in SEO: Strategic Quality Framework, Not a Switch

- The Evolution from E‑A‑T to E‑E‑A‑T in an AI‑First Era

- Experience, Expertise, Authoritativeness, Trust: Four Pillars, Different Risks

- YMYL vs Other Topics: Two Different Risk Profiles

- How Search Quality Raters and E‑E‑A‑T Influence Rankings Indirectly

- Common Misconceptions About E‑E‑A‑T (and Why They’re Dangerous)

- Practical Ways to Apply E‑E‑A‑T in Your AI Content Strategy

- Conclusion: Choose the Kind of AI SEO Strategy You Want to Run

AI, E‑E‑A‑T, and Multi‑Source Research: A Strategic SEO Playbook

Artificial intelligence has made it cheap, fast and dangerously easy to publish oceans of mediocre content. For Australian leaders trying to protect search visibility and brand reputation, relying on volume or clever prompts alone is now a high‑risk bet.

The framework that quietly decides who wins or loses in this AI‑saturated landscape is E‑E‑A‑T: Experience, Expertise, Authoritativeness and Trustworthiness. Google’s ranking systems are designed to reward original, high‑quality content that demonstrates E‑E‑A‑T, regardless of whether it’s created with human effort, AI, or a mix of both, as long as it’s not primarily generated to manipulate search rankings. That means smart use of AI can help you scale, but only if you treat E‑E‑A‑T as a strategic quality and risk lens, not a buzzword. This series unpacks what that actually means for your SEO decisions, how E‑E‑A‑T evolved, and why multi‑source research and human judgement now sit at the heart of durable organic growth, especially when paired with an AI content platform that can execute consistently.

E‑E‑A‑T in SEO: Strategic Quality Framework, Not a Switch

There’s no official Google “E‑E‑A‑T score” you can toggle on in a CMS or buy from a tool vendor. Any such score is just a third‑party approximation of how well your content aligns with Google’s E‑E‑A‑T principles, not a metric Google itself uses. E‑E‑A‑T comes from Google’s Search Quality Rater Guidelines and is applied by human reviewers to evaluate how well search results demonstrate experience, expertise, authoritativeness, and trustworthiness, with extra scrutiny on sensitive “Your Money or Your Life” topics. Their feedback helps train Google’s systems over time, so content that demonstrates strong E‑E‑A‑T signals is more likely to surface in search results, including AI Overviews, but there is no single ranking factor or dial labelled “E‑E‑A‑T.” Independent explanations of E‑E‑A‑T make the same point: it is a lens, not a numeric metric.

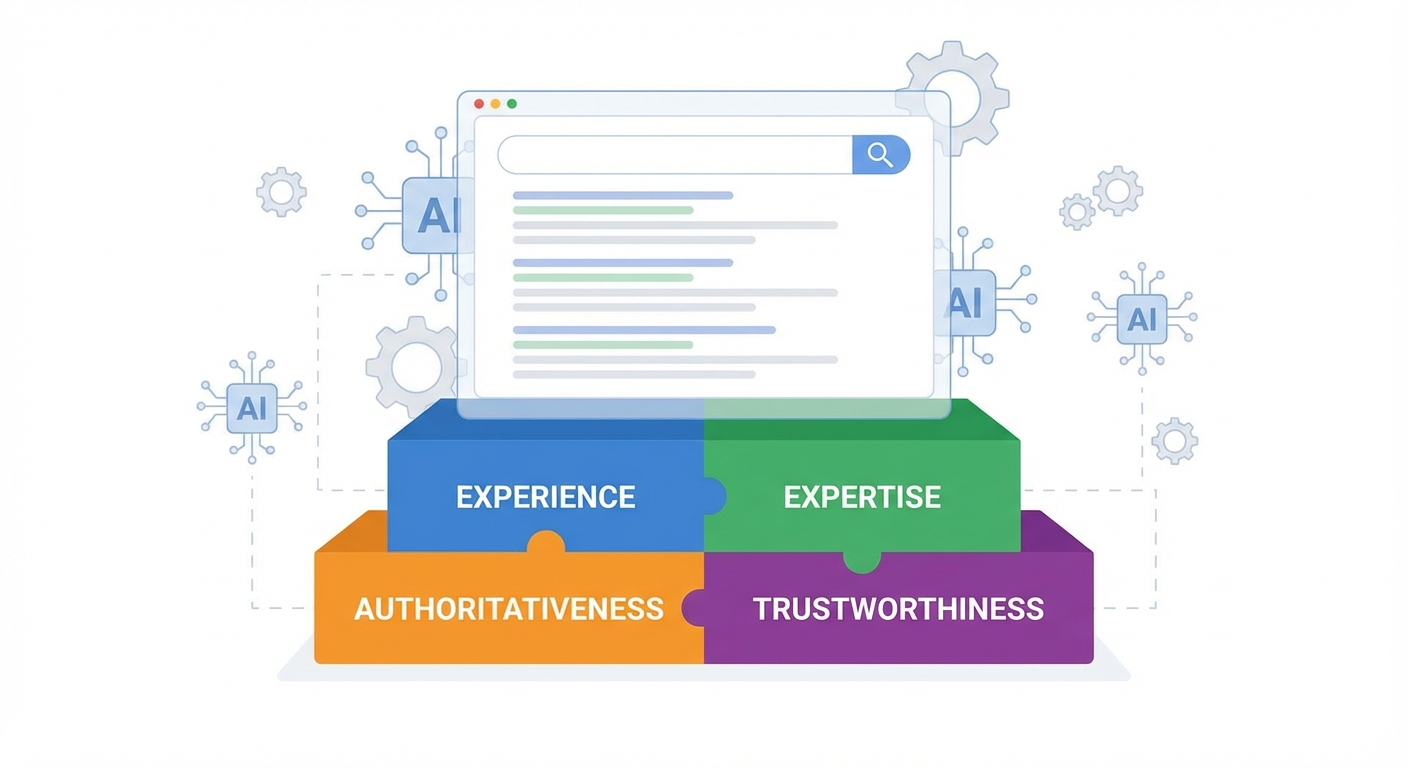

For marketing and product leaders, that shifts E‑E‑A‑T from a technical SEO tweak to a governance question. You are deciding what kind of information your brand is prepared to publish at scale, and how that will look under an external spotlight. Experience means the writer has actually done the thing they are describing; expertise brings depth of knowledge and currency with standards; authoritativeness reflects how the broader web and your audience see you; and trustworthiness underpins everything through accuracy, transparency and ethical conduct.

In practice, this framework guides day‑to‑day choices: which topics you should (and should not) cover, what level of subject‑matter review is required before publishing, when AI drafting is acceptable, and how strictly claims need to be referenced. Treated this way, E‑E‑A‑T becomes a decision filter for your entire content operation, shaping not just rankings but brand risk exposure in an era when AI search results amplify both strengths and weaknesses in your content footprint, a reality that platforms like the Lyfe Forge blog build into their publishing approach.

The Evolution from E‑A‑T to E‑E‑A‑T in an AI‑First Era

Around 2014–2015, Google introduced the E‑A‑T (Expertise, Authoritativeness, Trustworthiness) framework into its Search Quality Rater Guidelines as a way for human assessors to evaluate the quality of search results, with especially high scrutiny on sensitive “Your Money or Your Life” topics. Those ratings never changed rankings directly, but they gave engineers a target: tweak the algorithms until they surface pages that look, to humans, like they have strong E‑A‑T. Industry‑standard guides trace this same historical arc.

From 2018 onwards, core updates, most notably the August 2018 “Medic” core update, turned up the dial on E‑A‑T for health, finance and other YMYL (Your Money or Your Life) topics. Sites that lacked clear expertise or that contradicted established consensus saw traffic evaporate. This was less about punishing individual publishers and more about training the system to avoid amplifying advice that could cause serious harm.

Then, in December 2022, Google added a second “E” for Experience to its Search Quality Rater Guidelines. On paper that change was subtle; in practice, profound. It acknowledged that lived, first‑hand involvement can be as important as formal credentials – think a detailed solar installation guide written by a licensed electrician who has done hundreds of jobs, or a small business cash‑flow article grounded in the author’s own P&L pain. At the same time, generative AI tools went mainstream, flooding the web with passable but generic content stitched together from existing pages.

Put bluntly, E‑E‑A‑T evolved because Google needed stronger, more nuanced signals to separate trustworthy, experience‑rich material from re‑hashed summaries. For brands, this history matters: it tells you where Google is heading. Future updates are highly likely to keep rewarding sites that combine technical SEO competence with genuine human experience and demonstrated care for user safety, rather than those chasing quick wins with indistinguishable AI‑written articles – a pattern echoed in recent analyses of AI‑generated content and SEO.

Experience, Expertise, Authoritativeness, Trust: Four Pillars, Different Risks

Although the four letters sit neatly side by side, they solve different problems for Google and for your organisation. Experience is about first‑hand involvement. Has the person actually implemented a migration to GA4, switched CRMs, or managed paid campaigns with a similar budget? Content that includes concrete details, outcomes, screenshots or photos from real work sends this signal clearly.

Expertise speaks to depth and accuracy. In regulated or technical domains – tax law, pharmacology, cyber security – this often implies formal credentials, current knowledge of standards, and the ability to reference primary sources rather than second‑hand summaries. In more everyday topics, it may look like years of practice and a track record of solving the same user problem repeatedly.

Authoritativeness is socially constructed. It reflects how the wider ecosystem treats you: do reputable sites link to your guides; do journalists quote your spokespeople; is your brand mentioned on panels or in industry round‑ups; do you consistently cover a topic area in more depth than competitors? Building this is slower and more fragile than it appears, which is why short‑term tricks for earned links rarely age well.

Trustworthiness, however, is the non‑negotiable base. Google’s own Search Quality Rater Guidelines are blunt: trust is the most important part of E‑E‑A‑T, and pages deemed untrustworthy are rated as having low E‑E‑A‑T no matter how otherwise impressive they appear. That includes technical security (HTTPS), clear contact details, transparent authorship, honest claims, and corrections when errors are found. Misleading testimonials, unsubstantiated performance claims, or opaque affiliate relationships are not just bad SEO – they are business liabilities that E‑E‑A‑T will increasingly surface rather than hide, a point echoed in specialist breakdowns of E‑E‑A‑T as a trust framework.

YMYL vs Other Topics: Two Different Risk Profiles

Google does not treat every topic equally. YMYL subjects – topics that can significantly impact someone’s financial stability, physical or mental health, safety, or civic participation – are held to much stricter quality and trust standards in Google’s systems and quality evaluations. A guide on treatment options for depression, or advice on SMSF setups, needs strong evidence, alignment with expert consensus, and very careful wording. Low‑quality or misleading material is more likely to be suppressed, hit in core updates, or simply never gain traction.

For non‑YMYL areas – hobbies, lifestyle tips, entertainment – the standard is still meaningful, but less clinical. Google is more comfortable surfacing everyday experience, even when the author lacks formal qualifications, as long as the content is honest, useful and not pretending to be something it is not. A camping gear review by a weekend hiker can perform well if it is detailed and transparent about limitations.

Strategically, this split should drive your AI and content policies. For high‑risk categories, AI can assist research and drafting, but you need tight controls: clear sign‑off from qualified practitioners, strong referencing, conservative language around outcomes and timelines. For lower‑risk topics, you can be more experimental with AI‑assisted ideation and narrative, provided you still anchor pieces in real experience and accurate information. The mistake many teams make is treating all pages as if they sit in the same risk bucket. E‑E‑A‑T gives you the vocabulary to codify that difference into policy rather than relying on ad‑hoc judgement calls, something you can formalise inside your own content operations and governance structures.

How Search Quality Raters and E‑E‑A‑T Influence Rankings Indirectly

Another persistent confusion in SEO circles is how Google’s quality raters actually interact with rankings. Raters are human reviewers around the world who follow a detailed, publicly available handbook to evaluate how well search results meet user queries and to assess the overall quality, relevance, and trustworthiness of those results. They do not sit there manually boosting or demoting individual pages. Instead, their assessments generate large datasets that machine‑learning systems use to learn what “good” and “bad” results look like across billions of queries.

Within that handbook, E‑E‑A‑T provides the language raters use to justify their scores. If a result on a financial query lacks clear author information, relies on vague claims, or contradicts established guidance without explanation, it is likely to be judged as lower quality. Over time, algorithm updates are tested against these ratings: if a new model tends to surface pages raters call “high E‑E‑A‑T” more often, it is considered an improvement.

For businesses, this indirect pathway has two important implications. First, you cannot “game” E‑E‑A‑T with surface‑level signals alone – empty author bios, stock photos of doctors, or badge‑heavy footers will not rescue content that is thin or misleading. Second, changes you make today may not show up in rankings until future updates are rolled out and re‑evaluated against rater data. That delay tempts teams to revert to short‑term tactics, but it also means that consistent investment in quality can pay off dramatically when the next round of adjustments goes live. Seen through this lens, E‑E‑A‑T is less a secret ranking factor and more a long‑term contract: align with it patiently, and you are training the system to see your site as a safe, valuable choice in an increasingly noisy ecosystem. That holds especially if your AI tooling respects guardrails such as Google API usage policies and maintains a clean, crawlable structure via assets like an up‑to‑date post sitemap.

Common Misconceptions About E‑E‑A‑T (and Why They’re Dangerous)

Because the language around E‑E‑A‑T is abstract, myths spread quickly. One common misunderstanding is that E‑E‑A‑T is a simple on‑page checklist. Teams dutifully add author headshots, sprinkle a few citations, and assume the job is done. In reality, raters evaluate the substance of the content, the site’s overall reputation, and even how the brand is talked about elsewhere. Cosmetics help only if they reflect genuine substance.

A second misconception is that E‑E‑A‑T is irrelevant for “non‑serious” topics. While the formal bar is lower outside YMYL areas, users still reward clarity, honesty and depth. Search systems, trained on those preferences, do the same. A gaming guide that clearly reflects hundreds of hours of play will almost always outperform a hastily assembled AI summary, even when no one’s health or savings are on the line.

The third, and perhaps most dangerous, myth in the AI era is that if Google says it does not punish AI content, anything generated by a large language model is fine to publish as‑is. Google’s publicly stated position is more nuanced: it rewards original, high‑quality content that demonstrates E‑E‑A‑T “however it is produced,” and it explicitly warns that using automation, including AI, primarily to manipulate search rankings or generate low‑quality content at scale violates its spam policies. That puts the onus squarely on you to design processes where AI amplifies human judgement rather than replacing it, a nuance reinforced in recent discussions of E‑E‑A‑T‑aligned optimisation for AI search.

Leaders who misunderstand these points tend to under‑invest in subject‑matter review, over‑invest in content volume, and then act surprised when a core update wipes out a large slice of their traffic. Those who treat E‑E‑A‑T as an ongoing quality and risk programme – covering author selection, research methods, review workflows and post‑publication monitoring – tend to see slower but far more stable gains, particularly when combined with transparent user‑facing documentation like an accessibility statement that signals broader organisational trustworthiness.

Practical Ways to Apply E‑E‑A‑T in Your AI Content Strategy

Turning all of this into action starts with classification. Map your topics into risk tiers: high‑stakes YMYL areas, moderate‑risk subjects, and lower‑risk educational or lifestyle content. For each tier, define what level of experience, expertise and review is mandatory before anything goes live, and where AI is allowed to contribute (ideation, outlining, drafting, copy‑editing). Document this instead of relying on vague “use your judgement” guidance.
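One way to document this rather than leave it to judgement is to write the tiers down as data. The sketch below is a minimal illustration, not a recommended policy: the tier names and AI stages come from the paragraph above, while `TierPolicy`, `POLICY`, `disallowed_ai_stages`, the reviewer roles and the per‑tier stage sets are all hypothetical placeholders you would replace with your own rules.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH_YMYL = "high-stakes YMYL"
    MODERATE = "moderate risk"
    LOWER = "lower-risk educational or lifestyle"


# The four AI contribution stages named in the text above.
AI_STAGES = frozenset({"ideation", "outlining", "drafting", "copy-editing"})


@dataclass(frozen=True)
class TierPolicy:
    reviewer: str         # role that must sign off before publishing
    ai_stages: frozenset  # stages where AI is allowed to contribute


# Illustrative values only (assumptions, not recommendations).
POLICY = {
    RiskTier.HIGH_YMYL: TierPolicy(
        "qualified practitioner",
        frozenset({"ideation", "outlining", "drafting"}),
    ),
    RiskTier.MODERATE: TierPolicy("subject-matter expert", AI_STAGES),
    RiskTier.LOWER: TierPolicy("editor", AI_STAGES),
}


def disallowed_ai_stages(tier, stages_used):
    """Return the AI stages used on a piece that its tier does not permit."""
    used = set(stages_used)
    unknown = used - AI_STAGES
    if unknown:
        raise ValueError(f"unknown AI stage(s): {sorted(unknown)}")
    return sorted(used - POLICY[tier].ai_stages)
```

Calling `disallowed_ai_stages(RiskTier.HIGH_YMYL, ["drafting", "copy-editing"])` under these placeholder values flags `copy-editing`, which gives an editor something concrete to check before a piece moves to review.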

Next, redesign briefs to force E‑E‑A‑T into the draft itself. Ask writers and AI operators to include specific first‑hand examples, case details, numbers, or workflows rather than generic talking points. Require primary sources for any factual claims – industry reports, government sites, current standards – and insist that a human with relevant experience reads the piece with a “could this harm or mislead anyone?” lens before sign‑off, aligning with the kind of E‑E‑A‑T‑aware briefing advocated in AI search optimisation guides.
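The brief requirements above can also be expressed as a pre‑sign‑off check. This is a rough sketch under stated assumptions: `Claim`, `Draft` and `sign_off_blockers` are hypothetical names, and the three rules simply mirror the paragraph (first‑hand material present, a primary source for each factual claim, and a named human who has read the piece for harm). It does not judge whether a source is actually credible; that remains a human call.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Claim:
    text: str
    primary_source: Optional[str] = None  # e.g. a report, government site or standard


@dataclass
class Draft:
    title: str
    first_hand_examples: list = field(default_factory=list)
    claims: list = field(default_factory=list)
    harm_reviewer: Optional[str] = None  # person who read it for harm or misleading advice


def sign_off_blockers(draft):
    """List the reasons a draft is not yet ready for sign-off (empty list = ready)."""
    blockers = []
    if not draft.first_hand_examples:
        blockers.append("no first-hand example, case detail, number or workflow")
    for claim in draft.claims:
        if not claim.primary_source:
            blockers.append(f"unsourced claim: {claim.text}")
    if draft.harm_reviewer is None:
        blockers.append("no human harm-or-mislead review recorded")
    return blockers
```

A draft that returns an empty list has met the brief's minimum bar; anything else goes back to the writer or AI operator with the specific gaps listed.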

Finally, build E‑E‑A‑T into your measurement cadence. Go beyond rankings and traffic; track accuracy corrections, legal escalations, user feedback, and brand mentions. When something does well, look at the four pillars and ask which elements were especially strong. When something tanks after an update, review it through the same lens instead of reaching straight for more backlinks or new keywords. Over time, you will develop a house style where AI is a powerful research and drafting engine, but responsibility for quality and safety remains firmly human, even if you are using structured, policy‑driven tools such as subscription‑based AI content suites to scale production.

Conclusion: Choose the Kind of AI SEO Strategy You Want to Run

You can treat AI as a content vending machine and hope Google does not notice, or you can treat E‑E‑A‑T as the operating system for a safer, more durable search strategy. In a world where anyone can publish at scale, what sets winning brands apart is not access to tools, but the discipline to pair those tools with real experience, genuine expertise, earned authority and uncompromising trust, ideally supported by a platform like Lyfe Forge that bakes governance and quality into everyday workflows.

From here, the work shifts from principle to execution: designing AI + human workflows that stand up to scrutiny, and showing how smaller or local brands can still carve out trusted positions in search without resorting to risky shortcuts, particularly when they start with a transparent terms framework and an AI engine designed for sustainable growth rather than quick hacks.

Frequently Asked Questions

What does E‑E‑A‑T mean in SEO and why is it important in the age of AI content?

E‑E‑A‑T stands for Experience, Expertise, Authoritativeness and Trustworthiness, and it’s the lens Google’s quality raters use to judge whether content is genuinely helpful or just search‑engine spam. In an AI‑saturated environment where anyone can mass‑produce average articles, E‑E‑A‑T helps determine which content deserves visibility, especially on sensitive “Your Money or Your Life” topics. Brands that treat E‑E‑A‑T as a strategic quality framework, not a buzzword, are far more likely to earn durable organic growth.

Is there an official Google E‑E‑A‑T score or tool I can use to rank higher?

No, Google does not use or expose any kind of official “E‑E‑A‑T score.” Any E‑E‑A‑T metric you see in SEO tools is a third‑party estimate of how well your content aligns with Google’s guidelines, not a direct ranking factor. Google uses human quality raters to evaluate search results and train its systems, so you need to build strong real‑world signals of experience, expertise, authority and trust, rather than chase a single score.

Can I use AI content and still meet Google’s E‑E‑A‑T guidelines?

Yes, Google is fine with AI‑assisted content as long as it is original, accurate and primarily created to help users, not just manipulate rankings. The risk isn’t the AI itself but publishing unchecked, generic outputs with no subject‑matter oversight, sources or brand accountability. Combining an AI content platform like Lyfe Forge with proper human review, fact‑checking and multi‑source research allows you to scale content while still demonstrating strong E‑E‑A‑T.

How do I improve E‑E‑A‑T on my website in a practical way?

Start by aligning topics with real experience and expertise in your team; don’t publish thin content on areas you don’t understand. Add clear author bios, cite multiple credible sources, and implement a content review process that checks accuracy, recency and compliance, especially for YMYL topics. Lyfe Forge helps by systematising research, AI drafting and human review into a repeatable workflow so you can enforce E‑E‑A‑T standards at scale.

What is multi‑source research and how does it support E‑E‑A‑T and SEO?

Multi‑source research means drawing on several independent, credible sources instead of relying on a single article, tool output or AI response. It strengthens E‑E‑A‑T by showing you’ve cross‑checked claims, stayed current with standards and avoided parroting misinformation. Lyfe Forge’s platform can be configured to pull from curated source sets and then route drafts through human judgement, so your content reflects deeper, more reliable research.

How is E‑E‑A‑T different from traditional technical SEO?

Technical SEO focuses on crawlability, site speed, structured data and similar implementation details, while E‑E‑A‑T is about the substance and governance of what you actually publish. You can have a technically perfect site that still underperforms if your content lacks real‑world experience, credible expertise or trust signals. Lyfe Forge typically works alongside your existing technical SEO setup, providing the strategy and workflows to upgrade content quality, not just the infrastructure.

Why is E‑E‑A‑T especially critical for Australian businesses and YMYL topics?

For Australian brands operating in finance, health, legal, education or government‑adjacent sectors, Google applies extra scrutiny because poor information can directly affect people’s money, safety or wellbeing. Demonstrating local expertise, compliant advice and transparent sourcing is essential to maintain both rankings and regulatory trust. Lyfe Forge helps leaders in these sectors design AI and content processes that respect E‑E‑A‑T and local risk requirements from day one.

What does Lyfe Forge actually do to help with E‑E‑A‑T and AI content governance?

Lyfe Forge is an AI content platform and strategic partner that builds customised workflows around your brand’s expertise, review requirements and risk appetite. It embeds multi‑source research, SME input, fact‑checking and sign‑off into the AI content process, so E‑E‑A‑T is enforced by design, not as an afterthought. This lets marketing and product leaders scale content production without sacrificing accuracy, trust, or long‑term search visibility.

How should I decide which topics my brand should and shouldn’t cover for E‑E‑A‑T?

Use E‑E‑A‑T as a governance filter: only pursue topics where you can show real practitioner experience, up‑to‑date knowledge and organisational accountability. Avoid chasing keywords in areas where you lack qualifications, data or legal permission to advise, especially on YMYL subjects. Lyfe Forge helps teams map content opportunities against in‑house expertise and risk profiles, so your SEO roadmap stays aligned with what you can credibly and safely publish.

Will focusing on E‑E‑A‑T help my content appear in Google’s AI Overviews?

While Google doesn’t guarantee inclusion, content that is original, accurate, clearly sourced and demonstrates strong E‑E‑A‑T is more likely to be selected as input for AI Overviews. This means well‑researched articles, expert perspectives and trustworthy brand pages can gain additional visibility beyond traditional blue links. By using Lyfe Forge to standardise those quality signals across your content library, you increase your chances of being surfaced in these new AI‑driven experiences.