Table of Contents

- How Lyfe Forge Uses Built‑In Fact Checking to Earn Your Trust

- Multi‑Engine Research, Source Scoring & Fact‑Check‑Aware Generation

- Post‑Generation QA: Balancing Readability, SEO, and Factual Integrity

- Strengthening E‑E‑A‑T: How Lyfe Forge Builds Experience, Expertise, and Trust

- Why Fact‑Checked SEO Content Matters More in Australia

- Practical Ways to Use Lyfe Forge for Safer, More Useful SEO Content

- Conclusion: Choosing Fact‑Checked AI Over Guesswork

How Lyfe Forge Uses Built‑In Fact Checking to Earn Your Trust

Lyfe Forge was built around a blunt reality: AI can sound confident and still be wrong. Every time a model invents a statistic or cites a study that never existed, it chips away at reader trust and, over time, at a brand’s credibility. That’s disastrous for SEO, where Google’s E‑E‑A‑T lens (Experience, Expertise, Authoritativeness, Trustworthiness) puts real weight on reliability and clear sourcing, a point echoed in Google’s own guidance on creating helpful, reliable content.

Instead of treating research, writing, and fact checking as three separate jobs, Lyfe Forge fuses them into one pipeline. The platform scores sources for authority and recency, verifies individual claims across multiple AI providers, and forces the writing engine to respect those verification results, closely aligning with recommendations in resources like How to Fact‑Check AI Content Like a Pro and How To Fact Check AI Generated Content In 7 Steps. After that, a quality layer checks readability, SEO, and factual integrity together. The outcome is simple to state but hard to deliver at scale: articles you can actually trust, produced fast enough for modern content calendars.

Multi‑Engine Research, Source Scoring & Fact‑Check‑Aware Generation

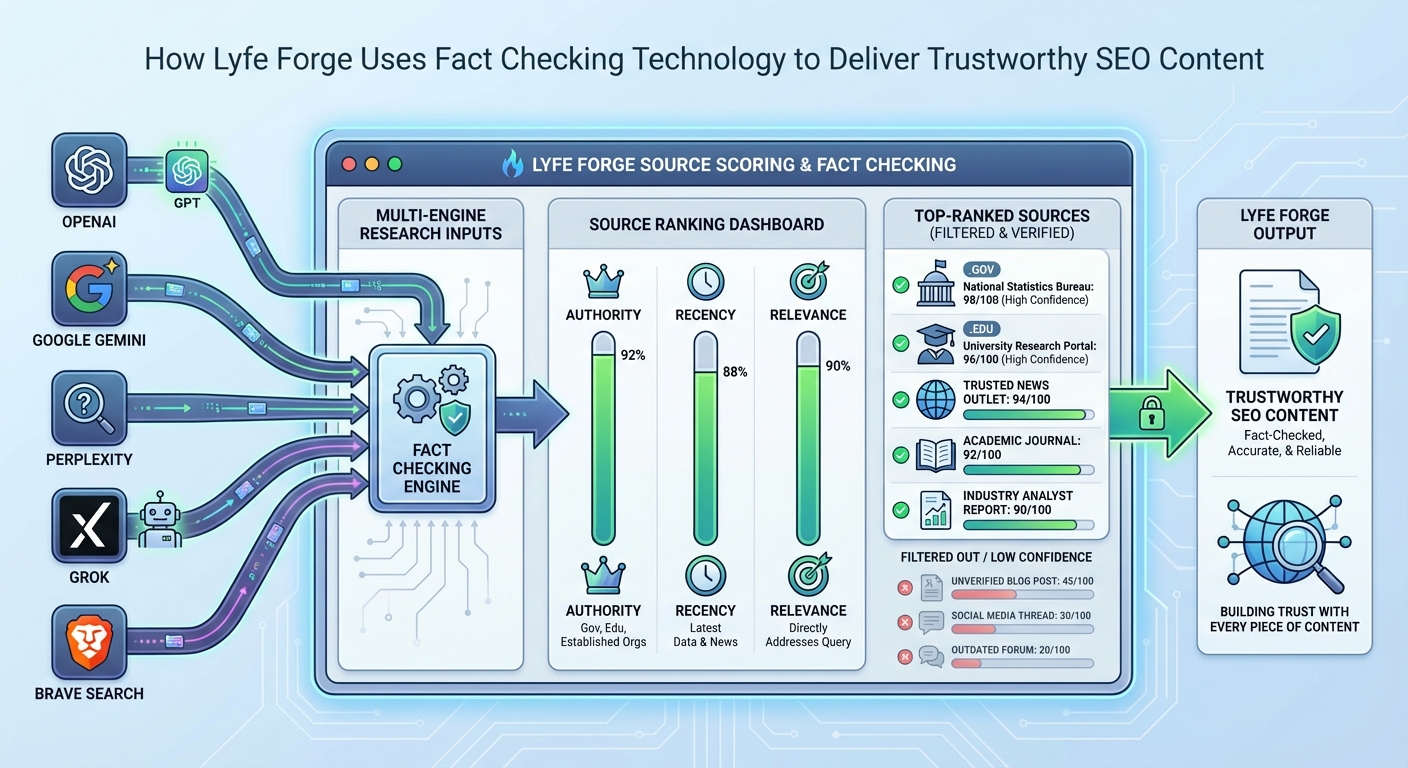

Trustworthy content starts long before a first draft. Lyfe Forge opens with a research stage that queries five engines in parallel: OpenAI, Google Gemini, Perplexity, Grok, and Brave Search. The responses are not all treated as equal. The platform runs every candidate source through a weighted scoring system that checks three things: authority, recency, and topical relevance, a process that echoes the structured workflows described in How To Fact Check AI Generated Content In 7 Steps.

Authority gets the biggest weight. Government domains like .gov or .gov.au are treated as top‑tier sources, academic and peer‑reviewed material sits just below that level, major news wires form the next tier, and general commercial sites come last. That ordering reflects the way information literacy frameworks rank credibility, even though there’s no formal “elite score” system behind the scenes. It nudges the AI toward the same references a human expert would instinctively trust and lines up with the way E‑E‑A‑T judges quality: by rewarding content grounded in reputable sources rather than random blogs.

Recency is scored next, with current‑year material typically treated as ideal, last year still rating highly, and anything older than about five years usually drifting toward “background only” status rather than being relied on as front‑line evidence. That’s critical for Australian YMYL topics, where a small change in law, tax thresholds, or health guidance can flip advice from safe to risky in an instant. Relevance completes the picture by matching source keywords and entities to your brief, weeding out articles that mention your topic in passing but don’t truly focus on it.

By the end of this phase, Lyfe Forge has a ranked research set that’s skewed toward authoritative, fresh, on‑topic sources. That dramatically reduces the risk of outdated stats, wrong‑jurisdiction advice, or invented numbers, which are failure modes often highlighted in analyses of generative SEO content and flagged by tools such as Originality.ai that detect and audit AI‑generated text.
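The scoring idea above can be sketched in a few lines of Python. This is a minimal illustration, not Lyfe Forge's actual implementation: the weights, tier values, recency cut-offs, and every name (`Source`, `score`, `rank`) are assumptions chosen only to match the ordering described in the text, where authority carries the most weight.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical weights: the article only states that authority weighs most.
WEIGHTS = {"authority": 0.5, "recency": 0.3, "relevance": 0.2}

@dataclass
class Source:
    url: str
    year: int   # publication year
    kind: str   # "academic", "news_wire", or "commercial" (assumed labels)
    text: str   # title or summary used for relevance matching

def authority_score(source: Source) -> float:
    # Government domains are top tier; other tiers use assumed values.
    host = urlparse(source.url).hostname or ""
    if host.endswith(".gov") or host.endswith(".gov.au"):
        return 1.0
    return {"academic": 0.85, "news_wire": 0.6}.get(source.kind, 0.3)

def recency_score(year: int, current_year: int) -> float:
    age = current_year - year
    if age <= 0:
        return 1.0   # current-year material is ideal
    if age == 1:
        return 0.8   # last year still rates highly
    if age <= 5:
        return 0.5
    return 0.1       # older than about five years: background only

def relevance_score(text: str, brief_terms: list[str]) -> float:
    # Share of brief terms that appear in the source text.
    if not brief_terms:
        return 0.0
    lowered = text.lower()
    return sum(t.lower() in lowered for t in brief_terms) / len(brief_terms)

def score(source: Source, brief_terms: list[str], current_year: int) -> float:
    total = (
        WEIGHTS["authority"] * authority_score(source)
        + WEIGHTS["recency"] * recency_score(source.year, current_year)
        + WEIGHTS["relevance"] * relevance_score(source.text, brief_terms)
    )
    return round(total, 3)

def rank(sources: list[Source], brief_terms: list[str], current_year: int) -> list[Source]:
    # Highest combined score first.
    return sorted(sources, key=lambda s: score(s, brief_terms, current_year), reverse=True)
```

With these assumed numbers, a current-year .gov.au page that covers every brief term outranks a decade-old commercial post that covers none, which is the behaviour the research stage is described as aiming for.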

Good sources are only half the job, though. The next hard problem is deciding which individual statements from those sources are safe to repeat, and which need to be rejected or corrected. Lyfe Forge tackles this with claim‑level verification. After the research pass, the platform automatically extracts factual claims – dates, numbers, named entities, specific assertions – and checks each one.

Verification uses several AI providers with live web access, such as OpenAI search, Perplexity, Grok, and Brave. The system asks each provider a focused question about the claim and compares their answers. It then labels every claim as verified or disputed, attaches a confidence level, and stores supporting URLs plus any clear contradictions found along the way. If more providers challenge a statement than back it, the claim is marked disputed and treated as unsafe to use, in line with the cross‑checking approach outlined in 6 steps in fact‑checking AI‑generated content and tools like Automated Fact‑Checker.
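A rough sketch of that consensus rule follows. The data shapes and names (`ProviderAnswer`, `ClaimVerdict`, `judge_claim`) are hypothetical, and two details are assumptions the text does not spell out: ties and claims with no clear answers are conservatively treated as disputed, and confidence is taken as the share of decided providers on the winning side.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class ProviderAnswer:
    provider: str
    supports: Optional[bool]     # True = backs the claim, False = challenges it, None = unclear
    url: Optional[str] = None    # supporting URL, if the provider returned one

@dataclass
class ClaimVerdict:
    claim: str
    status: str                  # "verified" or "disputed"
    confidence: float
    supporting_urls: list = field(default_factory=list)
    contradicting_providers: list = field(default_factory=list)

def judge_claim(claim: str, answers: list) -> ClaimVerdict:
    backing = [a for a in answers if a.supports is True]
    challenging = [a for a in answers if a.supports is False]
    decided = len(backing) + len(challenging)

    # Rule from the text: more challengers than backers means disputed.
    # Assumption: a tie or no decided answers is also treated as disputed.
    status = "verified" if len(backing) > len(challenging) else "disputed"

    # Assumption: confidence is the winning side's share of decided answers.
    confidence = max(len(backing), len(challenging)) / decided if decided else 0.0

    return ClaimVerdict(
        claim=claim,
        status=status,
        confidence=confidence,
        supporting_urls=[a.url for a in backing if a.url],
        contradicting_providers=[a.provider for a in challenging],
    )
```

Keeping the contradicting providers and supporting URLs on the verdict mirrors the description of stored evidence, so a later stage or a human reviewer can see why a claim was labelled the way it was.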

Lyfe Forge also has retry and fallback logic baked in. If one provider is down or returns a vague answer, another steps up automatically. That resilience matters for agencies and brands running at scale; you can’t afford a silent verification failure on the one article that happens to go viral. In practice, this consensus model cuts down on the “sounds right but isn’t” type of hallucination that plagues AI writing tools, from made‑up studies to slightly wrong percentages.
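The retry and fallback behaviour might look something like the sketch below. Everything here is illustrative: `ask_with_fallback` is a hypothetical helper, each provider is modelled as a plain callable, and a "vague" answer is simplified to an empty or missing string. The one deliberate design choice is that the function raises when every provider fails, so a verification failure is never silent.

```python
import time
from typing import Callable, Optional, Sequence, Tuple

Provider = Tuple[str, Callable[[str], Optional[str]]]

def ask_with_fallback(
    question: str,
    providers: Sequence[Provider],
    retries: int = 2,
    delay_seconds: float = 0.0,
) -> Tuple[str, str]:
    """Try each provider in order, retrying before moving to the next one."""
    for name, ask in providers:
        for _attempt in range(retries):
            try:
                answer = ask(question)
            except Exception:
                answer = None  # treat an outage like a missing answer
            if answer and answer.strip():
                return name, answer
            time.sleep(delay_seconds)
    # Fail loudly rather than let an unverified claim slip through.
    raise RuntimeError("no provider returned a usable answer")
```

A caller would pass the providers in order of preference, for example `ask_with_fallback("Is X true?", [("primary", primary_fn), ("backup", backup_fn)])`, where both functions are placeholders for real provider clients.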

Most AI writers follow a simple pattern: generate first, scramble to check later. Lyfe Forge flips that sequence on its head. When it moves into drafting, the writing engine receives the full verification context – lists of verified claims, disputed claims, extra high‑authority sources, and the associated confidence scores. The prompt doesn’t just nudge the model toward accuracy; it constrains it.

There are three hard rules baked into generation. First, verified claims are explicitly preferred; the system points the model toward that curated list whenever it needs facts, figures, or definitions. Second, disputed claims are blocked. The instructions tell the model to skip them entirely or to use the corrected version that emerged from the verification step. Third, for external links and citations, Lyfe Forge prioritises sources that the verification stack has already confirmed as both real and credible.
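To make the three rules concrete, here is one way a constrained prompt could be assembled from verification results. The function name, argument shapes, and wording of the instructions are all assumptions for illustration; the text does not describe the actual prompt format.

```python
from typing import Optional

def build_generation_prompt(
    brief: str,
    verified: list,
    disputed: dict,        # maps a disputed claim to its correction, or None
    approved_urls: list,
) -> str:
    lines = [
        f"Write an article for this brief: {brief}",
        "",
        "Rule 1: prefer these verified claims for facts, figures, and definitions:",
    ]
    lines += [f"- {claim}" for claim in verified]

    lines += ["", "Rule 2: do not repeat these disputed claims; use the correction where one is given:"]
    for claim, correction in disputed.items():
        if correction:
            lines.append(f"- AVOID: {claim} | USE INSTEAD: {correction}")
        else:
            lines.append(f"- AVOID: {claim}")

    lines += ["", "Rule 3: link only to these checked sources:"]
    lines += [f"- {url}" for url in approved_urls]
    return "\n".join(lines)
```

Passing the disputed claims explicitly, rather than simply omitting them, gives the model a list of statements to steer away from even if it would otherwise produce them on its own.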

That last rule is more important than it sounds. Studies on citation integrity have shown that large language models will often invent references, URLs, and even journal names when pressed for supporting evidence. In multiple independent analyses, roughly one in five AI‑generated references turned out to be fake or at least unverifiable, a pattern echoed in resources like AI Writes, You Verify: Master Fact‑Checking for AI‑Generated Content. Lyfe Forge’s pipeline addresses that by refusing to let the model “freestyle” citations; every link in the output must match a known, checked source with appropriate anchor text, so the content reads naturally yet stays inside the rails of what the evidence supports.
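A simple post-generation guard for that citation rule could be sketched as follows. It is an assumption-laden illustration: `unverified_links` is a hypothetical helper, URLs are found with a basic regular expression, and matching is exact apart from trimming a trailing slash or punctuation.

```python
import re

# Basic URL matcher; stops at whitespace, closing brackets, and quotes.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")

def _normalise(url: str) -> str:
    # Trim a trailing slash or sentence punctuation picked up by the regex.
    return url.rstrip("/.,;")

def unverified_links(draft: str, checked_urls: set) -> list:
    """Return every URL in the draft that is not in the checked source set."""
    allowed = {_normalise(u) for u in checked_urls}
    found = (_normalise(u) for u in URL_PATTERN.findall(draft))
    return [u for u in found if u not in allowed]
```

An empty result means every link in the draft maps to a checked source; anything else can be removed or sent back for verification before publishing.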

Post‑Generation QA: Balancing Readability, SEO, and Factual Integrity

Even with strict controls during writing, Lyfe Forge assumes that quality is a continuous process, not a one‑off event. Every article passes through a post‑generation Quality Assurance Suite. This layer scans the draft for three things in parallel: readability, SEO strength, and factual consistency against the verified research set, mirroring best‑practice workflows described in how to fact check AI generated content.

On the readability side, the platform checks sentence length, structure, and clarity, making sure the copy lands around an accessible secondary school reading level unless you explicitly ask for something more technical. It flags jargon without explanations, long walls of text, and places where tone drifts away from your brand voice. For SEO, it reviews headings, internal linking opportunities, on‑page optimisation, and entity coverage to build topical depth without slipping into keyword stuffing.
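As a small illustration of the sentence-length part of that readability check, the sketch below flags overly long sentences. The 25-word threshold and the name `long_sentences` are assumptions, and real readability scoring would look at far more than word counts.

```python
import re

MAX_WORDS = 25  # hypothetical threshold for an accessible reading level

def long_sentences(text: str, max_words: int = MAX_WORDS) -> list:
    """Return the sentences whose word count exceeds max_words."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [s for s in sentences if len(s.split()) > max_words]
```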

The more unusual piece is the Balance Check feature. Instead of forcing you to rewrite a paragraph when one sentence turns out to be shaky, Lyfe Forge can surgically replace only the inaccurate or low‑confidence claim with a corrected, verified alternative. The rest of the paragraph stays untouched. Because all these checks are automated and repeatable, they support a workflow where human editors can focus on higher‑order judgement – strategy, nuance, and brand fit – rather than spending hours chasing down every number. Evidence from Australian courts, regulators, and researchers points to a similar division of labour: AI systems excel at processing large volumes of information and routine analysis, while humans are expected to stay in the loop for discretion, oversight, and ethical judgement. (Source: sciencedirect.com)
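The Balance Check idea described above, replacing one shaky sentence while leaving its neighbours alone, can be sketched like this. The helper name `balance_fix` and the exact-match lookup are assumptions; the text does not describe how the feature locates or rewrites a claim internally.

```python
import re

def balance_fix(paragraph: str, corrections: dict) -> str:
    """Swap only the flagged sentences; every other sentence is kept verbatim."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return " ".join(corrections.get(sentence, sentence) for sentence in sentences)
```

For example, calling `balance_fix("Sales rose. The rate is 12%. Plan ahead.", {"The rate is 12%.": "The rate is 10%."})` changes only the middle sentence, so the surrounding copy and its tone are preserved.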

Strengthening E‑E‑A‑T: How Lyfe Forge Builds Experience, Expertise, and Trust

Search engines don’t just look at whether a page ranks for the right keywords. They also look at who is “speaking,” what they know, and whether a reader would reasonably trust the advice. That’s the job of E‑E‑A‑T: Experience, Expertise, Authoritativeness, and Trustworthiness. Lyfe Forge was designed with those principles in mind, aligning its multi‑AI research and fact‑verification workflow with Google’s E‑E‑A‑T framework, even though Google treats E‑E‑A‑T as a quality guideline rather than a single, direct ranking signal.

On the expertise and authority side, Lyfe Forge’s source scoring engine tilts heavily toward academic research, government reports, and reputable industry bodies. That gives your content the same sort of backbone you would expect from a subject‑matter expert doing thorough research by hand. When articles quote or summarise these sources, they signal that your brand is anchored in recognised knowledge, not just opinion or hearsay.

Experience and trust are handled through the Client DNA Vault and transparent citation practices. The DNA Vault stores your brand voice, preferred terms, local examples, and genuine author details, so the copy feels like it comes from a specific person or team, not a generic model. At the same time, every important factual claim is traceable back to a real source that readers could check if they wished. That openness is a core part of trust, especially for Australian audiences who are increasingly wary of vague AI‑generated advice without clear backing. Independent guides on E‑E‑A‑T are clear on this point: you build long‑term visibility by combining genuine expertise, lived or professional experience, and clear sourcing into one consistent package, rather than trying to game any single signal. Lyfe Forge’s integrated fact‑checking and brand‑aware writing make that combination much easier to achieve at scale. (Source: searchenginejournal.com)

Why Fact‑Checked SEO Content Matters More in Australia

For Australian brands, factual accuracy in SEO content is not only a search issue; it can also cross into regulatory territory. Misleading or deceptive statements about products, pricing, or results can still breach Australian Consumer Law, even when an AI system drafts the wording in the first place. Health, financial, and legal content can trigger extra scrutiny from bodies such as the TGA or ASIC when claims drift beyond what the evidence supports.

At the same time, local regulators are paying close attention to misinformation and AI. Reports from the ACCC, Treasury and parliamentary committees highlight risks from opaque algorithms, manipulative design and emerging AI systems, including concerns about dark patterns, fake reviews and a lack of transparency around how platform algorithms operate. While those documents largely focus on big tech platforms, they also send a clear signal to businesses: you remain responsible for what appears under your brand domain, no matter which tools you used to create it.

That environment makes robust fact‑checking an important piece of risk management, not just an SEO optimisation tactic. By building verification into its core pipeline, Lyfe Forge helps reduce the odds that a stray AI hallucination in a blog post turns into a complaint, a takedown request, or a trust‑damaging social media thread. It also supports a more transparent internal process, where you can show exactly how key claims were sourced and checked if questions arise later. Given the direction of Australian regulation around online disinformation, any system that logs sources, flags weak claims, and encourages corrections is better aligned with where the law – and public expectations – are heading. (Source: acma.gov.au)

Practical Ways to Use Lyfe Forge for Safer, More Useful SEO Content

Putting Lyfe Forge to work effectively is less about pressing a magic button and more about shaping a sensible workflow. One starting point is to define clear content tiers. For low‑risk, evergreen topics – think basic how‑tos or company updates – you might lean heavily on the automated pipeline: multi‑engine research, claim‑level verification, constrained generation, then a light editorial pass focused on tone and branding, all orchestrated inside the AI content creation features that power the platform.

For higher‑risk or YMYL topics, it’s wise to add an explicit human review stage on top of Lyfe Forge’s QA. Let the platform handle the tedious parts – checking every number, date, and named entity against live sources – then bring in a subject‑matter expert to sanity‑check the narrative, nuance, and local relevance. Because the system already marks which claims are verified and which were too weak to use, experts can focus attention where it matters most, following structured approaches like those outlined in AI Writes, You Verify: Master Fact‑Checking for AI‑Generated Content.

Treat the verification logs as living documentation. When strategy or leadership teams ask, “Can we stand behind this claim?” you can point to the sources and checks that backed it. Over time, that habit encourages better internal standards, from product pages to blog posts. And because the same engine powers all of your articles, improvements in the verification stack – new source tiers, updated recency rules, better contradiction detection – flow into every new piece of content you create.

Conclusion: Choosing Fact‑Checked AI Over Guesswork

AI has made it far easier to produce SEO content at volume, but volume without verification is a liability. Lyfe Forge’s approach – source scoring, claim‑level checks, fact‑aware generation, and structured QA – turns AI from a guesswork machine into a disciplined research and writing assistant. That matters for rankings, for legal safety, and, most importantly, for reader trust.

If you’re comparing SEO content options, look past word counts and keyword lists. Ask how each platform handles facts, citations, and corrections when the web changes beneath your feet. LyfeForge vs Jasper, Copy.ai & others is ultimately a question of whether a tool bakes verification into the core or bolts it on at the end. Lyfe Forge was built for teams that want speed and scale without sacrificing rigour, with clear pricing plans, a transparent Terms of Service, and a privacy‑first stance laid out in its Privacy Policy and Cookie Policy. If that sounds like you, now is the right time to explore a workflow where every important claim is checked before it ever reaches your audience. You can start with a free trial on the Lyfe Forge platform, get to know the team on the About Lyfe Forge page, model your savings with the Content ROI Calculator, or reach out via the contact page. (Source: https://blog.google/products/search/overview-our-rater-guidelines-search/)

Frequently Asked Questions

What is Lyfe Forge and how does it create trustworthy SEO content?

Lyfe Forge is an AI-powered content platform that combines research, writing, and fact checking into a single workflow to produce SEO content you can verify and trust. It scores sources for authority, recency, and relevance, verifies claims using multiple AI engines, and then forces its writing engine to stick to what’s been factually confirmed.

How does Lyfe Forge’s fact checking technology work?

Lyfe Forge queries multiple engines in parallel (including OpenAI, Google Gemini, Perplexity, Grok, and Brave Search) to gather candidate information and sources. It then scores these sources, cross-checks individual claims across them, and only allows content to be generated from information that passes its verification and quality checks.

How does Lyfe Forge decide which sources to trust for SEO content?

Lyfe Forge uses a weighted scoring system that prioritises authority, recency, and topical relevance. Government domains (.gov, .gov.au) receive the highest trust, with peer‑reviewed or academic sources just below, followed by major news wires. General commercial sites sit lower in the hierarchy unless they’re highly relevant and reputable.

How does Lyfe Forge help with Google E‑E‑A‑T and helpful content guidelines?

Lyfe Forge is designed around Google’s emphasis on Experience, Expertise, Authoritativeness, and Trustworthiness by grounding content in authoritative, recent, and clearly sourced information. Its built‑in fact checking and quality layer align with Google’s guidance on helpful, reliable content, which can support stronger E‑E‑A‑T signals for your pages.

What makes Lyfe Forge different from just using ChatGPT or other AI tools for content?

Generic AI tools can write fluent copy but don’t reliably verify facts or enforce source quality, which can lead to confident-sounding errors. Lyfe Forge adds a dedicated research and verification pipeline, multi‑engine cross‑checking, and fact‑check‑aware generation so the model cannot easily “make things up” or ignore evidence quality.

Can Lyfe Forge reduce AI hallucinations in my blog posts and landing pages?

Yes, minimising hallucinations is a core design goal of Lyfe Forge. By validating claims against multiple engines and only allowing high‑scoring, corroborated sources to feed the writing stage, it sharply reduces the risk of invented statistics, fake studies, and misleading statements slipping into your content.

How does Lyfe Forge balance speed and accuracy for SEO content production?

Lyfe Forge automates research, fact checking, and SEO optimisation in one pipeline so you don’t have to run separate tools or manual reviews for each step. This lets you publish content at modern content-calendar speeds while still maintaining strict controls over factual accuracy and source quality.

Does Lyfe Forge check SEO elements as well as facts?

Yes, after the fact checking and source scoring, Lyfe Forge runs a quality layer that evaluates readability, SEO alignment, and factual integrity together. This means on-page elements like structure, headings, and keyword intent are optimised without sacrificing accuracy or trustworthiness.

Can Lyfe Forge integrate with my current content strategy or agency workflow?

Lyfe Forge is built to slot into existing content workflows by handling the research and draft creation phase with its fact‑checking engine, while still allowing human editors and strategists to refine tone and messaging. Agencies and in‑house teams can use it to standardise quality checks across all articles and scale content production without losing trust.

Is Lyfe Forge suitable for highly regulated or technical industries?

Lyfe Forge is particularly useful for industries where incorrect information can be costly, such as finance, health, law, and government. Its focus on authoritative domains, peer‑reviewed research, and explicit claim verification helps keep content aligned with current regulations and best-practice guidance, though final compliance review should still be done by human experts.